Why helpful vs harmful matters

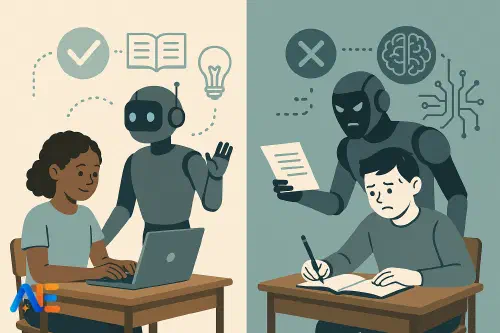

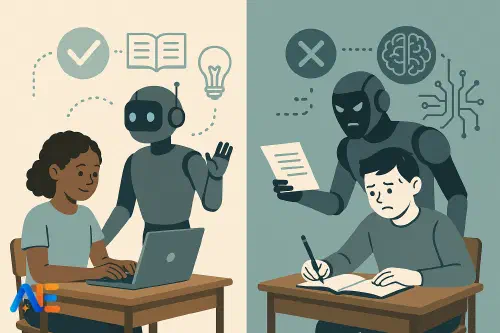

As AI tools spread through classrooms and homework, the key question is shifting. It is no longer simply whether pupils should use AI, but when that use genuinely supports learning and when it quietly undermines it. The same tool that gives a struggling reader a helpful explanation can also rob a motivated sixth-former of the hard thinking that would have built lasting understanding.

Teachers are already grappling with this tension. Some fear that any AI support is “cheating”; others worry pupils will be left behind if they never learn to work with AI. The reality sits between these extremes. Schools need a shared, developmental framework that distinguishes AI as scaffold from AI as shortcut, grounded in what we know about memory, metacognition and effortful learning.

This is not just about assessment integrity or plagiarism, though those matter too and connect closely with AI-resilient assessment design. It is about protecting the conditions in which deep learning happens, while still preparing young people for a future where AI will be a normal tool in study and work.

What learning science tells us

Cognitive science offers three particularly useful ideas for thinking about “helpful vs harmful” AI use.

First, learning is a change in long-term memory. For knowledge and skills to stick, pupils must actively process information, connect it to what they already know and revisit it over time. If AI does too much of that processing, pupils can feel successful in the moment but retain very little.

Second, productive struggle is essential. Tasks that are slightly effortful, but not overwhelming, lead to stronger learning. When AI removes all difficulty, it also removes the conditions that build resilience, problem-solving and conceptual understanding.

Third, metacognition matters. Good learners plan, monitor and evaluate their own thinking. If AI always decides the next step, pupils miss opportunities to choose strategies, reflect on errors and learn how they learn.

From these principles, we can draw a simple conclusion: AI is most helpful when it supports pupils to think, and most harmful when it thinks instead of them.

For a broader view of how AI literacy fits into curriculum planning, you may find it useful to read about AI literacy in schools alongside this framework.

A simple decision framework

A practical way to decide whether AI should scaffold or step back is to ask three questions before allowing its use on a task:

Is this a learning task or a performance task?

If the aim is to build understanding or practise a skill, AI should support, not replace, the core thinking. If the aim is to present polished work (for example, final layout or translation for an audience), more AI assistance may be appropriate.

Where is the desirable difficulty?

Identify the “thinking heart” of the task. That is the part pupils must do themselves, even if it is slower or messier. AI can help with the edges: clarifying instructions, giving examples or checking for errors afterwards.

What will pupils remember in six weeks?

If AI use means they will remember how to use a tool but not the underlying concept or process, the balance is off. AI should amplify, not replace, the mental work that leads to durable learning.

This framework can be adapted for different ages. What counts as “the thinking heart” of a task looks very different in a Year 2 classroom compared with a post-16 seminar.

Early primary (5–8)

In early primary, the priority is building core foundations: language, number sense, motor skills, attention and early self-regulation. At this stage, AI should be present but peripheral.

AI can help by reading instructions aloud, generating simple practice items or offering playful, low-stakes quizzes. A Year 1 teacher might use an AI tool to produce phonics-rich sentences tailored to the sounds the class is learning. Pupils then read, act out or illustrate those sentences away from the screen.

The key rule here is: AI can help adults design learning; it should not replace pupils’ hands-on experiences. Children in this age band should not be using AI to generate writing, solve maths problems or answer comprehension questions. Their brains need to wrestle with forming letters, counting objects and decoding text.

Classroom norms might include:

- “We use AI to create things we then do ourselves.”

- “AI helps teachers plan; children do the thinking.”

Upper primary (9–11)

By upper primary, pupils can start to interact with AI more directly, but within clear boundaries. The focus remains on curiosity, feedback and explanation, not on production.

A teacher might allow pupils to ask an AI, “Explain equivalent fractions using pizza,” then discuss which explanation is clearest and why. Or pupils might draft a paragraph themselves, then use AI to highlight unclear sentences and suggest improvements, while keeping their own ideas and structure.

Helpful rules at this stage include:

- AI can explain, give examples and suggest improvements, but it does not write full answers.

- Pupils must create first, then consult: they produce a draft, a plan or a solution before asking AI for help.

- Any AI use is followed by a short reflection: “What did I change after using AI, and why?”

These habits nurture metacognition and set expectations that AI is a tutor or coach, not a ghost-writer.

Lower secondary (11–14)

In lower secondary, pupils are ready for more explicit conversations about AI’s strengths and limitations. The goal is to support strategy and self-regulation, not to outsource thinking.

AI can be powerful for modelling approaches: for instance, showing multiple methods to solve an algebra problem, or offering alternative interpretations of a poem. A teacher might display an AI-generated solution and ask, “Where is this reasoning strong? Where is it weak?” This positions AI as a fallible partner and strengthens critical thinking.

Classroom rules might emphasise:

- No AI for first attempts on core practice tasks. Pupils try independently, then use AI to compare methods, check answers or get hints.

- AI can be used to generate practice questions at the right level of challenge, but pupils must still show full working or reasoning.

- Pupils should ask AI process-focused questions (“What steps do I take to revise for this test?”) rather than answer-focused ones (“What is the answer to question 5?”).

This is also a good stage to introduce the idea of a human–AI co-pilot model: pupils remain in the pilot seat, making decisions, while AI offers information and suggestions.

Upper secondary and post-16 (15+)

From age 15 onwards, pupils are approaching the world of work and higher education, where AI will be a standard tool. The challenge is to create an apprenticeship model: learning to work with AI while avoiding dependency.

At this stage, AI can support advanced tasks such as planning an extended essay, exploring multiple perspectives on an issue or checking the structure of a scientific report. Pupils might use AI to generate counterarguments to their thesis, then refine their reasoning in response. They might also use AI to simulate an examiner, asking, “What weaknesses might you see in this argument?”

However, the core intellectual work must remain theirs. Clear boundaries might include:

- AI can help generate ideas, but pupils must select, organise and justify which ideas they use.

- Any AI-generated text must be rewritten in the pupil’s own words, with critical evaluation of accuracy and bias.

- For key assessments, schools may specify “AI-free zones”, where pupils demonstrate unaided mastery, alongside separate tasks that explicitly assess AI collaboration skills.

These habits prepare students for a future where, as many argue in the debate around AI and cheating, integrity will be judged not just by whether AI is used, but by how it is used.

Designing AI-aware tasks

To protect deep thinking, tasks and homework need to be designed with AI in mind from the start, not patched afterwards.

One approach is to design tasks that make AI-generated answers obviously insufficient. For example, pupils might be asked to connect the day’s lesson to a local context, a recent class discussion or their own experiment results. AI may offer generic content, but pupils must integrate specific, lived experiences.

Another is to separate thinking phases from polishing phases. A history teacher might require pupils to handwrite a first outline of an essay in class, then allow AI-supported revision at home, with a short reflection on what changed and why.

Teachers can also use AI themselves to anticipate shortcuts. By pasting a homework question into an AI tool, you can see what a generic answer looks like, then adjust the task to demand more analysis, personal reasoning or reference to class-only materials.

Talking with students and families

Pupils and families need clear, shared language about “healthy AI use”. Framing AI as a thinking partner rather than a crutch can reduce anxiety and polarisation at home.

Schools might provide simple guidance sheets that explain:

- when AI use is encouraged (for example, to get extra explanations or practice questions)

- when it is restricted (for example, on drafts of assessed coursework)

- how pupils should acknowledge AI support, just as they would cite a book or website

Regular discussion in tutor time or assemblies can help pupils reflect on their own habits. Questions such as “When did AI make your work better this week?” and “When might it have stopped you learning?” encourage ongoing metacognition.

School-wide guardrails

Finally, schools need coherent guardrails that align classroom practice, homework, assessment and pastoral support. These do not need to be perfect from day one, but they should be explicit, revisited and informed by evidence.

Useful steps include:

- agreeing age-banded principles for AI use, like those outlined above, and building them into digital citizenship or study skills programmes

- reviewing assessment policies to ensure a balance between AI-free tasks and AI-supported tasks, as part of a broader strategy for AI-resilient assessment

- providing professional learning so staff can experiment with AI as a planning and feedback tool, while modelling the same critical stance we want pupils to adopt

When schools treat AI not as a threat or a miracle, but as a tool that must be carefully integrated into the developmental arc of learning, they can protect productive struggle and deep thinking while opening up new forms of support and challenge.

Happy scaffolding!

The Automated Education Team