Observation first

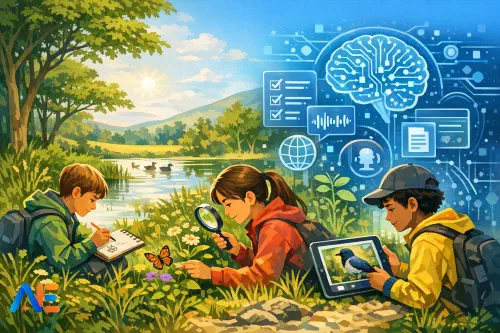

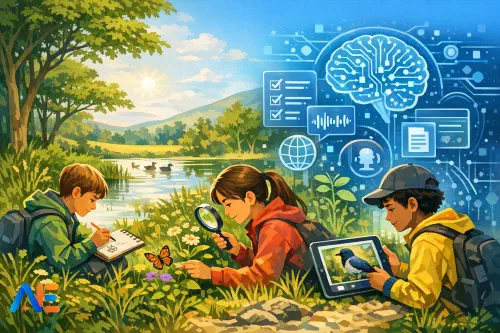

Outdoor learning thrives on attention. In spring, that might be the difference between “a bird” and “a bird with a pale eyebrow stripe, calling from the hedge, seen at 10:15”. AI can help later, but if it arrives too early it can narrow what pupils notice. When a tool offers a confident label, pupils often stop looking. They may also miss the point of fieldwork: gathering evidence, not collecting answers.

This is where outdoor learning and AI can clash. A pupil snaps a blurred photo, gets “robin” from an app, and writes it down without checking. Another uses a chatbot to “describe the habitat” and ends up with generic woodland text that does not match the scrubby patch behind the sports field. Even well-meaning adults can drift into “AI as a shortcut”, especially when time is tight.

A better approach is to treat AI as a post-visit assistant: helpful for organising, suggesting, translating, and prompting reflection, but never replacing careful observation. If you already use structured classroom routines for multimodal learning, you’ll recognise the logic in the four-channel-multimodal-ai-classroom-playbook: separate capture from processing, and be explicit about what each tool is for.

Pocket-to-paper routine

The “pocket-to-paper” routine is simple: devices stay in pockets during observation, then come out afterwards for careful processing. It works in a park, on a school-grounds transect, along a shoreline, or on a local street ecology walk. The key is that pupils know exactly when the boundary changes.

Before

Before you go out, set up roles and expectations. Pupils need a clear purpose (“Today we’re comparing habitats along a 50-metre line”) and a clear recording method (“You will sketch, describe, and tally; photos are optional later”). It helps to rehearse two micro-skills indoors: how to write a precise observation and how to sketch quickly without “art pressure”. A 90-second sketch with labels can be more useful than a perfect drawing.

Build a short safety protocol into the briefing so it becomes routine rather than a one-off warning. Keep it memorable: stop, scan, signal, share. Pupils stop before moving to a new spot, scan for hazards (traffic, water edges, stinging plants), signal to an adult if unsure, and share where they are with their group while staying inside the agreed boundary. If you run practical science regularly, you can mirror the same “risk first” mindset from the ai-in-science-labs-playbook, adapted for outdoor contexts.

During

During fieldwork, the rule is “paper first”: notebooks, clipboards, printed ID sheets if you use them, and simple tally tables. Encourage pupils to write what they can support with evidence: size comparisons, colour patterns, behaviour, direction of movement, and where it was found. A pupil might write, “Small brown beetle, about 1 cm, shiny, found under a damp log, moved quickly when exposed.” That is far more valuable than “ground beetle (AI said so)”.

If you want to keep motivation high, build in quick “noticing challenges” that still prioritise observation. For example, ask pupils to find three different leaf edges and sketch each with a label, or to record two bird calls using words (e.g., “chiffchaff: ‘chiff chaff chiff chaff’”). The device stays away; the noticing stays central.

After

After the outdoor session, devices come out for a structured “processing window”. This can happen back in class, in a sheltered space, or at home with clear boundaries. Pupils use AI for three jobs: cautious identification suggestions, structured data entry, and accessible outputs (audio, simplified text, translation). The teacher’s job is to keep AI in the role of assistant, not authority.

Cautious identification

AI is good at pattern matching, but species identification is high-stakes in a learning sense: a wrong label can spread quickly. Treat AI outputs as hypotheses. Pupils should learn to ask, “How sure is this, and what would make it wrong?”

AI confidence checklist

Teach pupils a short “AI confidence” checklist they must complete before writing any species name in their final notes. Keep it visible on the desk and on your slides.

- Evidence quality: Do we have a clear photo, a detailed sketch, or a strong written description?

- Context match: Does the suggested species fit the habitat, season, and location type?

- Distinguishing features: Can we name at least two features that separate it from similar species?

- Alternative candidates: Did the AI offer lookalikes, and did we consider them?

- Independent check: Did we confirm using a trusted field guide or reputable website?

- Confidence label: Are we recording it as “confirmed”, “probable”, or “possible”?

You can make this concrete with a classroom example. Suppose a pupil photographed a white flower in grass and AI suggests “daisy”. The checklist forces better thinking: are the petals evenly spaced, is the flower head the right size, are there similar species nearby (e.g., chamomile-like flowers), and do we have enough detail? Often the best outcome is “possible daisy species”, with a note about what evidence is missing.
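
For classes that log their checks digitally, the checklist could be sketched as a small Python record. This is only an illustration: the field names and the rule that turns ticks into a label are assumptions rather than part of the routine itself, so adjust the thresholds to suit your pupils.

```python
from dataclasses import dataclass


@dataclass
class ConfidenceCheck:
    """One pupil's answers to the checklist (field names are hypothetical)."""
    evidence_quality: bool         # clear photo, detailed sketch, or strong description
    context_match: bool            # fits habitat, season, and location type
    distinguishing_features: int   # how many separating features the pupil can name
    alternatives_considered: bool  # lookalikes were reviewed
    independent_check: bool        # confirmed in a field guide or reputable website


def confidence_label(check: ConfidenceCheck) -> str:
    """Map checklist answers to a label. The thresholds are an assumed rule of thumb."""
    core = [
        check.evidence_quality,
        check.context_match,
        check.distinguishing_features >= 2,
        check.alternatives_considered,
    ]
    if all(core) and check.independent_check:
        return "confirmed"
    if sum(core) >= 3:
        return "probable"
    return "possible"


# The daisy example: a photo without enough detail and no independent check.
daisy = ConfidenceCheck(
    evidence_quality=False,
    context_match=True,
    distinguishing_features=1,
    alternatives_considered=True,
    independent_check=False,
)
print(confidence_label(daisy))  # possible
```

The label is only as good as the answers behind it, so the pupil still has to do the looking and the checking before ticking anything.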

Verification steps

Build verification into the workflow rather than treating it as an optional extension. Ask pupils to paste the AI suggestion into their notes, then add a “because” line using their own evidence: “AI suggested X because it has Y; I observed Y and Z; I am recording it as ‘possible’ because the photo is blurred.” This turns AI into a thinking prompt.

If you want a consistent classroom move, align it with your wider planning routines, such as the checking steps in ai-across-the-curriculum-lesson-moves-planning-template: claim, evidence, check, revise.

Data logging safely

Fieldwork data is powerful, but it can also overshare. A pupil’s photo can include faces, school logos, or a recognisable location. A shared document can accidentally publish precise coordinates of a rare species or a pupil’s route home. “Minimum data” is a good default: collect what you need for learning, and nothing more.

A practical approach is to use a simple template that separates observation from interpretation. Pupils record time (rounded), weather, habitat type, and a location description that is not pinpointed (“north edge of the field near the hedge”, not a map pin). For younger pupils, even “sunny/shady” and “dry/wet” can be enough to support good comparisons.
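
If your class keeps records in a spreadsheet or a short script, the template might look something like the Python sketch below. The field names, the `round_time` helper, and the 15-minute rounding are all assumptions chosen for illustration; the point is simply that observation and interpretation live in separate fields and the location is words, not coordinates.

```python
from dataclasses import dataclass, asdict
from datetime import time


def round_time(t: time, minutes: int = 15) -> time:
    """Round a time down to the start of its block (15 minutes is an assumed default)."""
    return time(t.hour, (t.minute // minutes) * minutes)


@dataclass
class FieldRecord:
    # Observation: what the pupil actually saw or measured.
    observed_at: time
    weather: str
    habitat: str
    location_description: str  # words only, never a map pin or coordinates
    observation: str
    # Interpretation: kept separate so it can be revised after checking.
    interpretation: str = ""
    confidence: str = "possible"


record = FieldRecord(
    observed_at=round_time(time(10, 17)),
    weather="sunny",
    habitat="hedge base, shady and damp",
    location_description="north edge of the field near the hedge",
    observation="Small brown beetle, about 1 cm, shiny, found under a damp log",
)
print(asdict(record)["observed_at"])  # 10:15:00
```

Leaving `interpretation` empty by default makes it obvious when a pupil has recorded evidence but not yet reached a conclusion.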

AI can help here by turning messy notes into a tidy table, but only if you control what goes in. Encourage pupils to type or paste only the necessary text, rather than uploading whole documents or images by default. If your tool allows it, switch off chat history and model training, and use class accounts rather than personal log-ins.

Reflection and writing

The most valuable writing after fieldwork is explanatory: what pupils think is happening, and why their evidence supports it. AI summaries can sound polished while being empty. Instead, use AI to prompt better reflection, not to write the reflection.

A strong routine is “notes to explanation”. Pupils start with three observations, then write one claim that links them. For example: “The shaded hedge base had more woodlice than the open grass.” Then they add reasoning: moisture, shelter, temperature. AI can help by asking probing questions: “What variables might explain this difference?” or “Which of your observations is the strongest evidence?” It can also help pupils structure a paragraph with sentence starters, while keeping the content theirs.

If you do allow AI to generate a model paragraph, treat it as a text to critique. Pupils highlight any sentence that is not directly supported by their notes and either delete it or add evidence. This keeps authorship and accuracy in the foreground.

Accessibility outdoors

Outdoor learning can be brilliant for inclusion, but it also introduces barriers: wind noise, glare, mobility constraints, and the cognitive load of new environments. Used thoughtfully after the observation phase, AI can remove some of those barriers without diluting the learning.

Audio is a practical example. A pupil can record a short voice note after the session, describing what they saw while it is fresh. Back indoors, AI can transcribe it into text for a notebook entry. For pupils who struggle with extended writing, this can be the difference between a few words and a detailed account. Similarly, AI can simplify a pupil’s own paragraph into shorter sentences for checking, or translate key vocabulary for EAL learners so they can participate fully in discussion.

The key is that accessibility outputs should be based on the pupil’s captured evidence. You are not using AI to invent observations they did not make; you are using it to help them express and revisit what they did.

Safeguarding and privacy

Safeguarding needs to be designed in, especially when images and location are involved. Start with consent and clarity: what will be photographed, who will see it, and where it will be stored. Make “no faces, no names, no logos” a default rule for pupil-captured images, and model how to frame shots to avoid accidental identifiers.

Location data deserves special care. Photos taken on phones often carry embedded GPS coordinates in their metadata when location tagging is switched on. If pupils are using personal devices, consider asking them to switch off location tagging for the activity, or use school devices with managed settings. Avoid uploading images that show distinctive landmarks if the tool stores them externally. Where possible, use tools that allow local processing, or at least clear controls over retention and training.

Finally, teach pupils to check tool settings as part of digital literacy. A simple classroom script helps: “Before you paste anything in, ask: does this include a person, a place, or personal information?” That habit transfers well beyond fieldwork.
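
For older pupils or a computing club, the script could be backed up by a rough automated screen. The Python sketch below is an assumption-laden illustration: the patterns are crude, the names are hypothetical, and it cannot spot names, faces, or landmarks, so it supports the habit rather than replacing the pupil's own check.

```python
import re

# Rough patterns only (assumptions for illustration); they will miss plenty.
PATTERNS = {
    "coordinates": re.compile(r"-?\d{1,3}\.\d{3,}\s*,\s*-?\d{1,3}\.\d{3,}"),
    "email address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "phone number": re.compile(r"\b\d[\d\s]{9,}\d\b"),
}


def privacy_flags(text: str) -> list[str]:
    """Return the names of any patterns found in the text."""
    return [name for name, pattern in PATTERNS.items() if pattern.search(text)]


notes = "Woodlice under log at 51.50735, -0.12776. Ask sam@example.org for photos."
print(privacy_flags(notes))  # ['coordinates', 'email address']
```

An empty result does not mean the text is safe to paste; it only means none of these particular patterns appeared.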

Ready-to-copy resources

Pupils work best when instructions are short, concrete, and repeated. Here are ready-to-copy pieces you can drop into a slide or handout.

For pupil instructions, keep the rhythm: “Pocket during noticing; paper for evidence; device later for checking.” Tell them that AI is allowed only after they have three forms of evidence (a sketch, a description, and a tally or measurement). That single rule prevents most “instant answer” behaviour.

For prompt frames, give pupils wording that demands uncertainty and evidence. For example: “Based on this description and sketch, suggest three possible species and tell me what features would confirm each.” Or: “Turn my field notes into a table with columns for habitat, evidence, and confidence (confirmed/probable/possible). Do not add new observations.” For reflection: “Ask me five questions that will help me explain my results using my notes.”
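
If you would rather hand out the frames as reusable templates, one way to do it is sketched below in Python. The wording follows the frames above; the placeholder names and the `build_prompt` helper are assumptions for illustration.

```python
from string import Template

# Wording follows the prompt frames above; placeholder names are assumptions.
IDENTIFY = Template(
    "Based on this description and sketch, suggest three possible species "
    "and tell me what features would confirm each.\n\nDescription: $description"
)
TABULATE = Template(
    "Turn my field notes into a table with columns for habitat, evidence, "
    "and confidence (confirmed/probable/possible). "
    "Do not add new observations.\n\nNotes: $notes"
)


def build_prompt(frame: Template, **fields: str) -> str:
    """Fill a frame; raises KeyError if a placeholder is not supplied."""
    return frame.substitute(**fields)


prompt = build_prompt(
    IDENTIFY,
    description="Small brown beetle, about 1 cm, shiny, under a damp log",
)
print(prompt)
```

Because the fixed wording sits in the template, pupils only supply their own evidence, and the instruction not to add new observations cannot be quietly dropped.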

For teacher checks, focus on what matters: evidence-first notes, confidence labels, and privacy compliance. When you glance at a pupil’s work, you should be able to see their raw observations, their verification step, and a clear statement of certainty. If any of those are missing, the task is not finished, even if the writing looks polished.

May your spring fieldwork be rich in noticing and light on guesswork.

The Automated Education Team